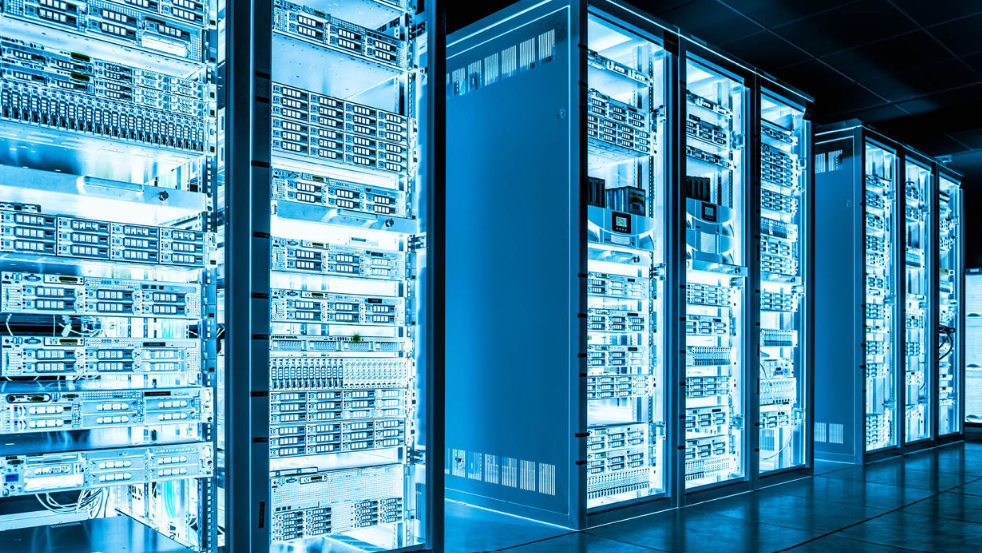

Introduction to Server Performance Issues

Servers underpin the infrastructure of a modern organization, whether they support internal operations or customer-facing services. However, even the most robust servers can lose efficiency over extended periods of continuous operation. This decline is easy to overlook, yet it can cause noticeable delays, lower reliability, and, in some cases, downtime.

Understanding the root causes of these performance issues is essential to keeping operations running smoothly. A gradual decline in server performance can stem from long periods of use without a restart, rising resource demands, and increasingly complex workloads. When systems run for long stretches without being restarted or serviced, small inefficiencies and resource mismanagement start to add up.

Long uptime can also worsen inefficiencies in how a server manages resources such as memory and processing power. Over time, poorly managed resources and the absence of routine optimization can severely degrade responsiveness and processing capacity.

By identifying these challenges, businesses can maintain their servers proactively so that they continue to meet operational requirements even during extended periods of operation.

Resource Depletion Over Time

Because servers often run around the clock for long periods, resources can be depleted gradually, eroding efficiency. One of the most notable problems is the memory leak, where an application fails to release allocated memory back to the system even after it no longer needs it.

Over time, these leaks can consume much of the server's memory, leaving little for other important tasks. Left unchecked, this can cause sluggish performance, longer load times, and possible system instability.
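One way to spot the steady growth that a leak produces is to fit a trend line to periodic memory readings. The following is a minimal sketch, assuming the readings are already collected as a list of megabyte values at a fixed interval. The function names and the `min_slope_mb` threshold are illustrative assumptions, not part of any particular monitoring tool.

```python
from statistics import mean


def memory_growth_slope(samples_mb):
    """Least-squares slope of memory usage, in MB per sample interval."""
    n = len(samples_mb)
    if n < 2:
        raise ValueError("need at least two samples")
    xs = range(n)
    x_bar = mean(xs)
    y_bar = mean(samples_mb)
    num = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, samples_mb))
    den = sum((x - x_bar) ** 2 for x in xs)
    return num / den


def looks_like_leak(samples_mb, min_slope_mb=1.0):
    """Flag steady growth: slope above the threshold and few decreases.

    min_slope_mb is a hypothetical threshold; tune it to the workload.
    """
    slope = memory_growth_slope(samples_mb)
    # Count how often usage went down between consecutive samples.
    drops = sum(1 for a, b in zip(samples_mb, samples_mb[1:]) if b < a)
    return slope >= min_slope_mb and drops <= len(samples_mb) // 4
```

A process whose usage climbs at every reading is flagged, while one that oscillates around a stable level is not. A real deployment would likely need longer sampling windows, since caches and warm-up phases also grow memory legitimately.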

Another problem comes from temporary files, caches, and logs that are not cleared automatically. These files can consume valuable storage space and impair the system's ability to access data efficiently. As a result, processes that depend on fast data retrieval can slow down.

Processor usage is another resource prone to inefficiency over time. Some background processes or applications may start consuming more CPU cycles than they need, either because they are misconfigured or because of minor flaws that accumulate with continued use. This inefficiency can delay new tasks and reduce the overall responsiveness of the system.

Moreover, resource allocation mechanisms can perform worse over long uptime. For example, task scheduling or memory management algorithms can become less effective as the system grows more fragmented or comes under heavier demand. These inefficiencies also contribute to the gradual decline in performance.

Software and System Limitations

Outdated or poorly optimized software can significantly affect a server over a long period of operation. Applications and operating systems that are not updated regularly miss critical fixes and improvements that boost performance or address inefficiencies. The result can be slower execution, higher error rates, and incompatibility with newer workloads or technologies.

Software bloat can also become a serious problem. Long-running systems tend to accumulate unneeded background processes or services, which consume resources such as memory and CPU time. This not only reduces the server's capacity to handle active tasks but can also cause resource contention between competing applications.

Compatibility is another concern. A server whose software stack contains older components may not integrate well with newer applications or protocols. Such a mismatch can reduce productivity and raise the risk of errors when processing data or communicating with other systems.

In virtualized environments, hypervisors and virtual machine managers can also develop inefficiencies over a long period of operation. Over time, these tools may run into resource allocation problems, especially when virtual machines are not optimized or when their workloads change dynamically without corresponding changes in resource provisioning.

Lastly, even operating systems are not immune to inefficiency. Some OS features can slow down over time, especially under heavy load or when system logs and other metadata have grown too large. Left unchecked, these limitations can compound and further degrade server responsiveness and reliability.

Hardware Wear and Tear

Server hardware can become less efficient with age, and under heavy, sustained use this can lead to significant drops in performance over time. Aging CPUs and memory modules can perform worse under high load, leading to lower processing speeds and reduced reliability. As these parts gradually deteriorate, they become less able to handle demanding workloads as efficiently as before.

Storage devices, including hard drives and solid-state drives (SSDs), are especially vulnerable to wear from constant read and write operations. In hard drives, mechanical components can wear out, making access times slower and failure more likely.

Although SSDs are more durable in most cases, they can lose performance as their cells approach their write cycle limits, which reduces overall speed and efficiency. These problems are particularly acute in systems that access data frequently, since the delays can affect overall operations.

Fans and cooling systems also tend to deteriorate over time, whether from dust buildup or mechanical failure, which raises operating temperatures. Persistent overheating strains other hardware components, reducing performance and potentially causing severe failures.

Similarly, power supplies can lose the ability to deliver a steady output, and the resulting fluctuations can disrupt server operations. Left unattended, these hardware limitations can severely affect a server's capacity to meet operational requirements during prolonged uptime.

Network Bottlenecks

Uneven traffic distribution or inefficient use of resources can lead to network bottlenecks, which slow data flow and degrade server performance. Bandwidth can be overwhelmed when applications or users generate sustained high network traffic. This congestion can slow communication between systems, hurting the response times of applications that rely heavily on real-time data exchange.

Unoptimized network traffic is one cause of bottlenecks. For example, large file transfers, misconfigured protocols, or heavy use of particular applications can occupy the available bandwidth, leaving little for other important processes. These inefficiencies can accumulate over time and create a chain effect that further impairs network performance.

The problem can also be aggravated by load-balancing strategies that do not account for changing demand patterns. For example, when traffic is not distributed well across the available resources, some servers or network segments can become overloaded while others are underused. This imbalance places excessive load on certain systems, slowing data transfer and adding latency.
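To illustrate how a demand-aware strategy evens out load, here is a minimal sketch of least-connections selection, one common alternative to fixed rotation. The backend names and connection counts are made up for illustration, and a production load balancer would also track connections closing, health checks, and server weights.

```python
def pick_backend(active_connections):
    """Return the backend with the fewest active connections.

    Ties are broken by name so the choice is deterministic.
    """
    if not active_connections:
        raise ValueError("no backends available")
    return min(active_connections,
               key=lambda name: (active_connections[name], name))


def assign(active_connections, new_requests):
    """Route new requests one by one and return the resulting load.

    The input mapping is copied, not modified.
    """
    load = dict(active_connections)
    for _ in range(new_requests):
        load[pick_backend(load)] += 1
    return load
```

Starting from an uneven state such as `{"a": 12, "b": 3, "c": 7}`, new requests go to the lightly loaded backends first, so the gap between the busiest and the idlest server narrows instead of widening.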

Network hardware can also become a problem when older routers or switches cannot support modern workloads. These devices might not be compatible with the latest data transmission standards, or might simply lack the processing power to handle large volumes of traffic. Monitoring and optimizing network components is essential to keeping the network running smoothly over long-term use.

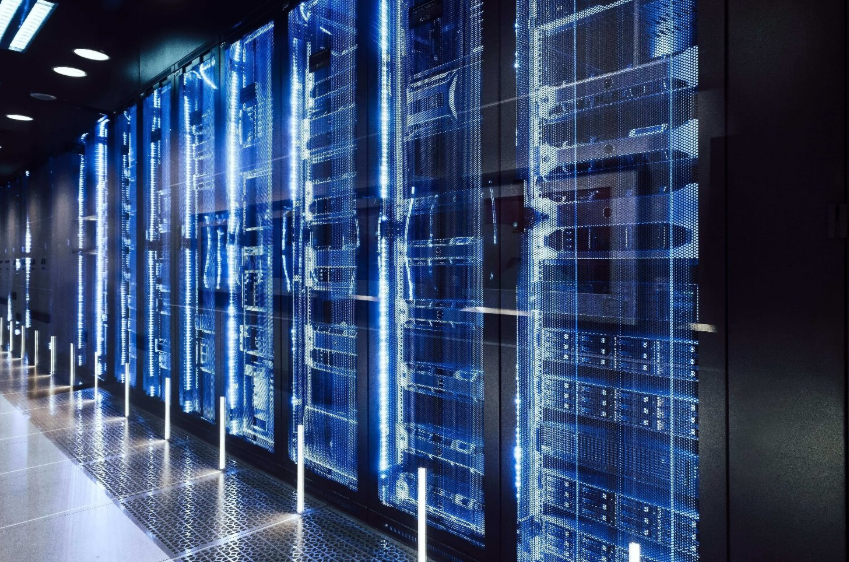

Strategies for Maintaining Optimal Performance

Regular server optimization is a major part of sustaining performance in the long run. One of the most effective tactics is scheduling restarts to clear temporary files, reset system processes, and release memory. Periodic restarts help clear inefficiencies that accumulate over time, such as memory leaks or resource fragmentation.
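A restart policy can be as simple as comparing uptime against a maintenance window. The sketch below assumes a Linux host, where `/proc/uptime` holds the uptime in seconds as its first field. The 30-day default is a hypothetical policy value, not a recommendation from this article.

```python
SECONDS_PER_DAY = 86_400


def parse_uptime(text):
    """Return uptime in seconds from text in the Linux /proc/uptime format."""
    # The file holds two numbers: uptime and cumulative idle time.
    return float(text.split()[0])


def restart_due(uptime_seconds, max_uptime_days=30):
    """True once uptime exceeds the maintenance window.

    max_uptime_days is a hypothetical policy setting.
    """
    return uptime_seconds > max_uptime_days * SECONDS_PER_DAY
```

On a Linux server the check could read the live value with `parse_uptime(open("/proc/uptime").read())` and then raise an alert or schedule the restart for the next maintenance slot rather than rebooting immediately.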

Another useful practice is monitoring resource usage trends over time. With performance monitoring tools, IT teams can spot unusual spikes in CPU, memory, or disk usage and fix them before they cause larger problems. This proactive approach allows corrections based on real-time and historical data and reduces the risk of sudden slowdowns.
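As a rough illustration of spike detection, the sketch below compares each reading with the mean and standard deviation of the readings just before it. The window size, threshold, and `min_spread` floor are assumptions chosen for the example, and dedicated monitoring tools use more refined methods.

```python
from statistics import mean, pstdev


def find_spikes(samples, window=5, threshold=3.0, min_spread=1.0):
    """Return indices of readings far above their recent baseline.

    A reading is a spike when it exceeds the mean of the previous
    `window` readings by more than `threshold` standard deviations.
    `min_spread` keeps a perfectly flat history from flagging tiny changes.
    """
    spikes = []
    for i in range(window, len(samples)):
        history = samples[i - window:i]
        baseline = mean(history)
        spread = max(pstdev(history), min_spread)
        if samples[i] - baseline > threshold * spread:
            spikes.append(i)
    return spikes
```

For CPU readings such as `[20, 22, 21, 23, 22, 95, 24, 22]`, only the jump to 95 is reported. The readings that follow it are not flagged, because they sit below a baseline that the spike itself has raised.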

Cleanup of unnecessary files, such as old logs or temporary data, can also be automated with scripts or tools. Deleting these files keeps storage space free and avoids the clutter that can degrade performance.
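A cleanup script of this kind might look like the following sketch, which removes files whose last modification is older than a chosen age. The function names and the dry-run default are illustrative choices. It only looks at files directly inside the given directory and leaves subdirectories alone.

```python
import time
from pathlib import Path


def find_stale_files(directory, max_age_days, now=None):
    """Return files in `directory` last modified more than max_age_days ago."""
    now = time.time() if now is None else now
    cutoff = now - max_age_days * 86_400
    return sorted(
        p for p in Path(directory).iterdir()
        if p.is_file() and p.stat().st_mtime < cutoff
    )


def remove_stale_files(directory, max_age_days, dry_run=True, now=None):
    """Delete stale files and return the list that was (or would be) removed.

    dry_run defaults to True so a first run only reports candidates.
    """
    stale = find_stale_files(directory, max_age_days, now=now)
    if not dry_run:
        for path in stale:
            path.unlink()
    return stale
```

Running it first with `dry_run=True` shows which files would go, which is a sensible precaution before pointing it at a log directory that other services still write to.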

In virtualized environments, it is important to adjust the resources assigned to virtual machines, particularly when workloads vary. Regular monitoring of these environments helps ensure that resources go where they are needed and that no individual virtual machine consumes an excessive share.
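One simple way to reason about such adjustments is to split a host's memory in proportion to each machine's observed demand while guaranteeing every machine a minimum. The sketch below is a hypothetical calculation only. The VM names, the `floor_mb` guarantee, and the demand figures are assumptions, and applying the result would go through whatever interface the hypervisor in use provides.

```python
def rebalance(total_mb, demand_mb, floor_mb=512):
    """Split total_mb across VMs in proportion to demand, with a per-VM floor.

    Integer division may leave a few MB unallocated; nothing is overcommitted.
    """
    if floor_mb * len(demand_mb) > total_mb:
        raise ValueError("not enough memory to give every VM its floor")
    spare = total_mb - floor_mb * len(demand_mb)
    total_demand = sum(demand_mb.values())
    if total_demand == 0:
        # No demand data: share the spare memory equally.
        share = spare // len(demand_mb) if demand_mb else 0
        return {vm: floor_mb + share for vm in demand_mb}
    return {
        vm: floor_mb + spare * demand // total_demand
        for vm, demand in demand_mb.items()
    }
```

With 8192 MB available and demands of 3000, 6000, and 1000 MB for three machines, the busiest machine receives the largest share, yet the lightest one never falls below its floor.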

Finally, airflow and cooling in server rooms must be adequate. Removing dust and servicing cooling systems periodically helps prevent overheating, which both protects the hardware and avoids the slowdowns caused by elevated operating temperatures.

Conclusion

Long server uptime can expose weaknesses in both software and hardware, a reminder that regular maintenance should be routine. If no action is taken, inefficiencies such as resource overuse, outdated software, and network overload can creep in, causing slower operations and decreased reliability.

Overcoming these issues requires a multi-layered approach: resource usage monitoring tools, software and operating system updates, and proper management of physical components such as storage media and cooling systems.

By staying vigilant and addressing performance issues before they grow out of control, businesses can prolong the life of their servers and keep them fit for operational needs.

If performance drops after long uptime, upgrade to OffshoreDedi optimized infrastructure built for sustained stability and speed.